Researchers have created smart glasses that convert visual data into distinctive sound representations, improving the ability of visually impaired people to navigate their environment. The technology is inspired by the echolocation skills of bats. For people who are visually impaired, such technology could be life-changing.

Assistive technology is the field devoted to creating technologies that help people with sensory impairments overcome obstacles in their daily lives. Being visually impaired or having low vision (LV) makes it difficult for a person to carry out daily tasks and participate in social interactions.

The use of auditory, haptic/tactile, and visual feedback to enhance senses is a broad area of research in assistive technology. To help LV people “see,” researchers at the University of Technology Sydney (UTS) have created next-generation smart glasses that convert visual information into distinct sound icons. This technology is known as “acoustic touch.”

According to Chin-Teng Lin, a co-author of the study, “smart glasses typically use computer vision and other sensory information to translate the wearer’s surroundings into computer-synthesized speech.” Acoustic touch technology, on the other hand, sonifies objects and generates distinct sound representations when they come into the device’s field of view. For instance, rustling leaves could indicate a plant, and buzzing sounds could indicate a cell phone.

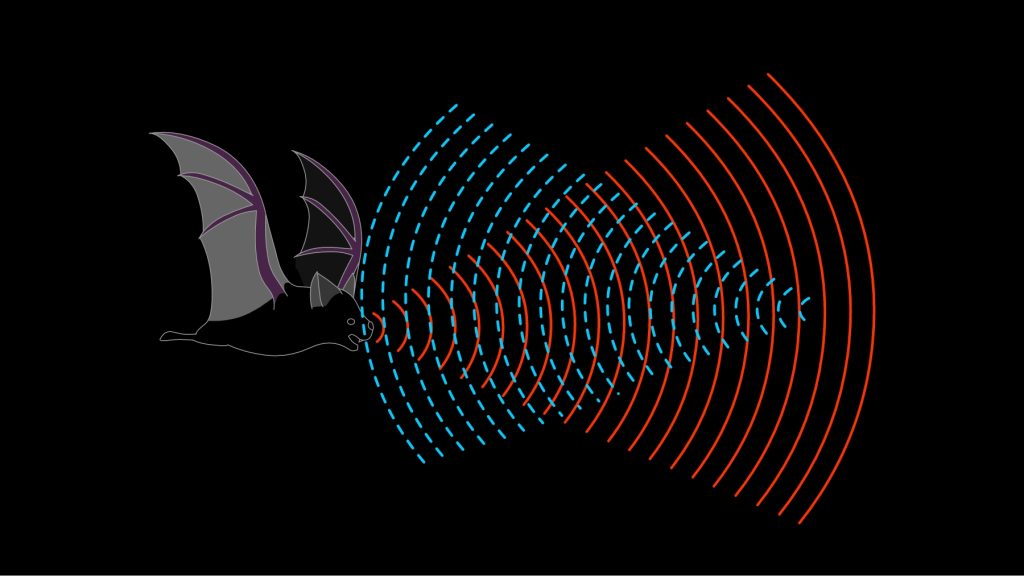

The researchers named their smart glasses Foveated Audio Devices (FADs) after the way bats use echolocation, which involves emitting a sound wave that bounces off an object and returns an echo that indicates the object’s size and distance.

The FAD consisted of an Android phone, the OPPO Find X3 Pro, and a pair of augmented reality glasses. The audio input and camera/head tracking output of the glasses were controlled by the Unity Game Engine 2022. Together, these components allowed the FAD to transform objects into unique sound icons when they entered the device’s field of view.
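To make the idea concrete, the field-of-view behavior described above can be sketched in a few lines of Python. This is a minimal illustration only, not the researchers' implementation: the `DetectedObject` type, the `icons_in_view` function, the sound-icon names, and the 30-degree default field of view are all assumptions made for the example. Only the plant and cell phone pairings echo the examples given in the article.

```python
from dataclasses import dataclass

# Hypothetical sound-icon table. The plant and cell phone pairings follow the
# article's examples; the icon names themselves are made up for this sketch.
SOUND_ICONS = {
    "plant": "rustling_leaves",
    "cell phone": "buzzing",
}


@dataclass
class DetectedObject:
    label: str
    # Horizontal angle relative to where the head is pointing (0 = straight ahead).
    azimuth_deg: float


def icons_in_view(objects, fov_deg=30.0):
    """Return the sound icons for known objects inside the field of view.

    fov_deg is an assumed total field-of-view width, not a value from the study.
    """
    half_width = fov_deg / 2.0
    return [
        SOUND_ICONS[obj.label]
        for obj in objects
        if obj.label in SOUND_ICONS and abs(obj.azimuth_deg) <= half_width
    ]


scene = [
    DetectedObject("plant", 5.0),        # nearly straight ahead
    DetectedObject("cell phone", 40.0),  # off to the side
    DetectedObject("chair", 0.0),        # no sound icon defined
]
print(icons_in_view(scene))
```

In this sketch, turning the head changes each object’s angle, so an object only "sounds" once the wearer is facing it, which is the behavior the article attributes to the FAD.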

Fourteen adults took part in the study: seven LV individuals and seven blindfolded sighted individuals who served as controls. The study was divided into three stages: training, a seated task in which participants used the FAD to sonify and scan objects on a table, and a standing task that examined how well the FAD performed when participants were moving around a cluttered area and looking for an item. Four objects were used in the study: a bottle, a bowl, a book, and a cup.

The researchers found that the wearable device significantly improved LV individuals’ ability to recognize and reach for objects without exerting too much mental effort.

“The auditory feedback empowers users to identify and reach for objects with remarkable accuracy,” said Howe Yuan Zhu, the study’s lead and corresponding author. “Our findings indicate that acoustic touch has the potential to offer a wearable and effective method of sensory augmentation for the visually impaired community.”

With some tweaking, acoustic touch technology could become an integral part of assistive technologies, allowing LV people to better access their surroundings.