As governments race to use technology to fight COVID-19, we should be fully aware that this push can, and probably will, open the floodgates to technologies that affect our society far more profoundly than the pandemic itself.

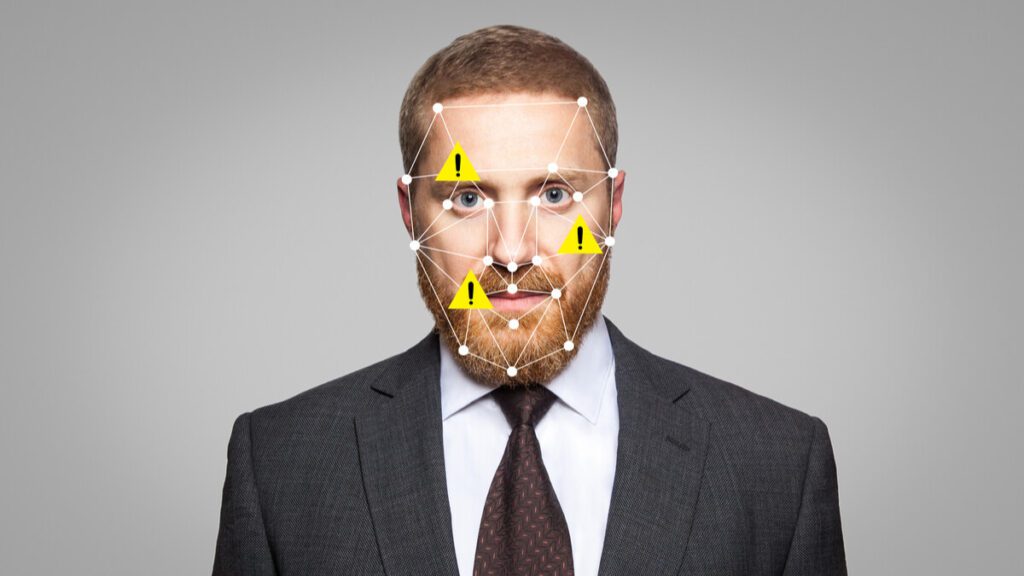

Enter facial recognition technology

These systems scan images and video for people’s faces, then attempt either to classify them or to make assessments of their character.

Recent updates from the world’s leading technology and surveillance firms allow people to be identified with high accuracy, even while they are wearing masks.

In parallel, thermal imaging technology is being proposed to identify potential coronavirus carriers by measuring their body temperature. London’s Heathrow Airport recently announced that it will implement this technology for large-scale passenger temperature checks.

Some governments, including those of China, Russia, India, and South Korea, are going a step further. They can now identify people with elevated temperatures, reconstruct their movements through automated analysis of CCTV footage, and then identify, and even notify, people who may have been exposed to the virus. While all of this can sound impressive, and even like a promising way to rid societies of COVID-19, we must stop and ask ourselves: at what cost?

The rapid, widespread adoption of contact tracing apps around the world suggests that most of us are willing to compromise our privacy in order to manage the pandemic.

The more serious concern is the set of unanswered legal, democratic, and constitutional questions raised by using this technology to fight COVID-19.

False positives

These new facial recognition technologies do not account for a significant risk of discrimination and abuse: the probability of false positives.

False positives are more common than you might think, and they have several causes. The imaging equipment could be damaged, broken, or used incorrectly. Human reviewers can misinterpret readings or, worse, so can an automated process that no one has any control over.

What else can facial recognition do?

Facial recognition systems deployed to fight COVID-19 have other potential uses (and attract other criticisms) as well.

Studies indicate that the practice of categorizing and classifying humans according to visible differences has a deeply troubled past, with roots in eugenics, a set of beliefs that advocates ideas such as selective breeding. This movement and its ideology have proven to be extremely dangerous.

Where do we go from here?

The pandemic has made it clear that we are at a crossroads. On one hand, technology is being developed and deployed to mitigate the spread of COVID-19. On the other, we might be digging our own graves by releasing oppressive systems into society that ultimately do not serve the public interest.

The team at the Interaction Design Lab has developed Biometric Mirror, a speculative and deliberately controversial facial recognition technology designed to get people thinking about the ethics of new technologies and AI.

After thousands of people from all walks of life tried the app, the team found a widespread lack of public understanding of how facial recognition works, what its limitations are, and, most importantly, how it can be misused.

Worrying, don’t you think?