This year has been a roller coaster ride for the United States, from the emergence and swift spread of the Covid-19 pandemic to dreadful natural disasters, and the ongoing trade war the U.S. has been waging against China.

The main headline that has dominated the news and even shaped the narrative of the U.S. presidential elections is racism. Following the killing of George Floyd due to police brutality, a massive wave of protests touched almost every city, calling for the end of racism in America.

During the protests, violence erupted between riot police and demonstrators as tensions rose, and local police later targeted individual activists once the storm had passed.

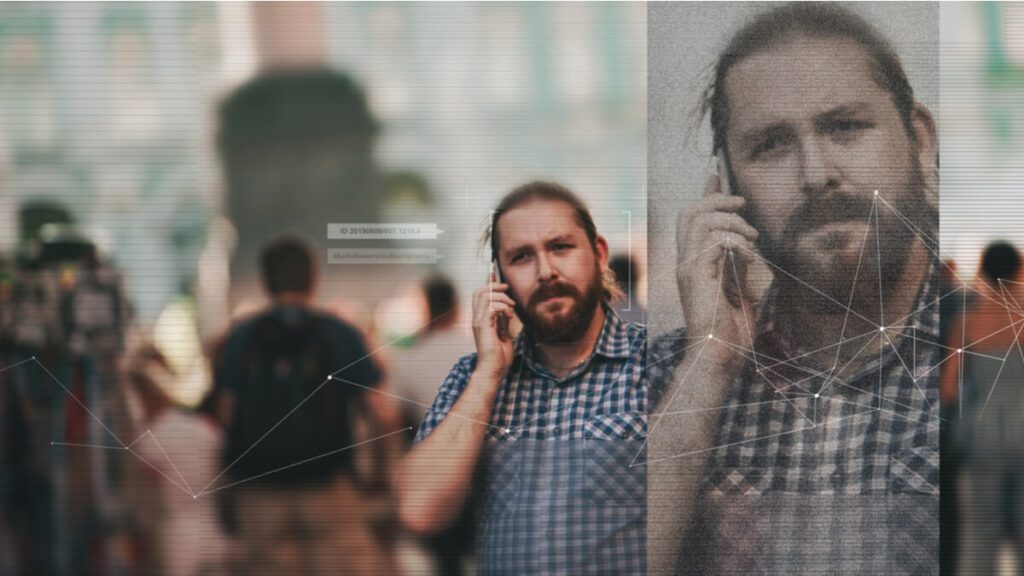

But how? How were local police able to recognize or even target specific individuals within an ocean of people? The answer is simple: Artificial Intelligence (AI) and facial recognition.

The malicious use of the technology was noticed even by tech giants, to the extent that companies such as Microsoft, Amazon and IBM publicly announced they would no longer allow police departments access to their facial recognition technology.

Microsoft President Brad Smith was widely quoted as saying his company wouldn’t sell facial-recognition technology to police departments in the U.S., “until we have a national law in place, grounded in human rights, that will govern this technology.”

It has become common knowledge that developers of the tech, including Alphabet, Amazon, Facebook, IBM and Microsoft, as well as individuals like Stephen Hawking and Elon Musk, believe that now is the right time to talk about the nearly boundless landscape of artificial intelligence.

What concerns these companies is AI’s tendency to make errors, particularly in recognizing people of color and those in other underrepresented groups.

The reality of now

According to a survey by Capgemini, a Paris-based technology services and consulting company, 65 percent of executives said “they were aware of the issue of discriminatory bias” with these systems, and several respondents said their company had been negatively affected by its AI systems.

“Six-in-10 organizations had attracted legal scrutiny and nearly one-quarter (22 percent) have experienced consumer backlash within the last three years due to decisions reached by AI systems,” the survey highlighted.

In parallel, cause for concern can be found in companies that lack employees responsible for the ethical implementation of AI systems, despite backlash, legal scrutiny, and awareness of potential bias.

“About half (53 percent) of respondents said that they had a dedicated leader responsible for overseeing AI ethics. Moreover, about half of organizations have a confidential hotline where employees and customers can raise ethical issues with AI systems,” the survey added.

Be that as it may, consumers have high expectations with regard to AI and its accountability within companies: nearly seven-in-10 expect a company’s AI models to be “fair and free of prejudice and bias against me or any other person or group.”

In parallel, 67 percent of customers said they expect a company to “take ownership of their AI algorithms” when these systems “go wrong.”

Learning from Singapore

Many have praised the steps Singapore has taken in its adoption of artificial intelligence, as the country has played it right since the very beginning.

The country launched a reference guide to keep AI use ethical on both the business and consumer ends. The AI Ethics & Governance Body of Knowledge (BoK) gives business leaders and IT professionals a reference on the ethical aspects of developing and deploying AI technologies.

The book, which was powered by industry group Singapore Computer Society (SCS), was put together based on the expertise of more than 60 individuals from multi-disciplinary backgrounds, with the aim of aiding the “responsible, ethical, and human-centric” deployment of AI for competitive advantage.

The BoK was developed based on Singapore’s latest Model AI Governance Framework, which was updated in January 2020, and will be regularly updated as the local digital landscape evolves, SCS said during its launch.

Many experts from both the private and public sectors have hailed the guide as an ideal model: it carefully outlines the positive and negative outcomes of AI adoption and looks at the technology’s potential to support a “safe” ecosystem when used properly.

The wrong side of Pandora’s Box

First things first: AI ethics do not come in a box with your order.

Take a moment to think about how many uses AI already has and will have later down the line as we edge closer to 5G.

Values vary between companies across a multitude of industries, which is why a data and AI ethics program must be tailored to the specific business, along with the regulatory needs that are relevant to it.

Let’s examine a few problems that have already surfaced or are likely to.

1. Affecting our behavior and interactions

It is only natural that AI-powered bots have become better and better at emulating human conversations and relationships.

The case can be made with a bot named Eugene Goostman.

Back in 2014, Goostman was reported to have won the Turing Challenge for the first time, a test in which humans chat via text with an unknown entity and then guess whether it is a human or a machine.

The AI fooled about a third of the judges into thinking that they had been talking to an actual human being.

This is somewhat scary, since this achievement is merely the beginning of an age that looks to increase interactions between humans and well-spoken machines, especially for customer service or sales.

“While humans are limited in the attention and kindness that they can expend on another person, artificial bots can channel virtually unlimited resources into building relationships,” a report by the World Economic Forum (WEF) highlighted.

We have already seen this in other forms: technology can trigger the reward centers in the brain without our being fully aware of it. Just think of the myriad click-bait headlines we seamlessly scroll through.

“These headlines are often optimized with A/B testing, a rudimentary form of algorithmic optimization for content to capture our attention. This and other methods are used to make numerous video and mobile games become addictive,” WEF explained.

This is a big cause for concern, since tech addiction is slowly but surely becoming the new frontier of human dependency.
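For readers curious about what headline A/B testing looks like in practice, here is a minimal sketch in Python. It is not taken from the WEF report or from any publisher's system: the function name `ab_test`, the click counts, and the choice of a two-proportion z-test are all assumptions made for illustration.

```python
from math import erf, sqrt


def ab_test(clicks_a, views_a, clicks_b, views_b):
    """Compare the click-through rates of two headlines.

    Uses a two-proportion z-test (an assumed, textbook choice) to judge
    whether the difference between headline A and headline B is likely
    to be more than chance.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b

    # Pooled click rate under the assumption that both headlines perform equally.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))

    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = 1 - erf(abs(z) / sqrt(2))

    return {
        "rate_a": rate_a,
        "rate_b": rate_b,
        "winner": "B" if rate_b > rate_a else "A",
        "p_value": p_value,
    }


# Hypothetical numbers: each headline is shown to 2,400 readers.
result = ab_test(clicks_a=120, views_a=2400, clicks_b=180, views_b=2400)
print(result["winner"], round(result["rate_a"], 3), round(result["rate_b"], 3))
```

With these invented numbers, headline B draws more clicks per view, and the small p-value suggests the gap is unlikely to be noise. A publisher running thousands of such comparisons would steadily drift toward whatever wording captures the most attention.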

2. Bias in AI

It is a known fact that AI processes information much faster than humans can, but that doesn’t mean it’s fair and neutral.

A known pioneer of AI solutions and tech is Google, whose most basic example is its flagship Google Lens feature, which allows your smartphone camera to identify objects, people, locations, scenes and much more.

This feature in and of itself holds great power that could be pushed to either extreme of the moral spectrum. Missing the name of an object is one thing, but missing the mark on racial sensitivity is another.

An AI system that mistakes a Samsung for a OnePlus is no big deal, but software used to predict future criminals that shows bias against black people is something completely different.

This is why human judgment will prove integral to how the technology should be used.

“We shouldn’t forget that AI systems are created by humans, who can be biased and judgmental. Once again, if used right, or if used by those who strive for social progress, artificial intelligence can become a catalyst for positive change,” the report by WEF said.
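Bias of the kind described above can be measured, at least in part. One common check is to compare how often a system wrongly flags people in different groups. The sketch below assumes a risk-prediction tool whose output can be compared against what actually happened; the function name `false_positive_rates`, the group labels, and every record in the sample data are invented for illustration and do not come from any real system.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return, per group, the share of people who did NOT reoffend
    but were still flagged as high risk.

    Each record is a tuple: (group, predicted_high_risk, reoffended).
    """
    wrongly_flagged = defaultdict(int)
    did_not_reoffend = defaultdict(int)

    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            did_not_reoffend[group] += 1
            if predicted_high_risk:
                wrongly_flagged[group] += 1

    return {
        group: wrongly_flagged[group] / total
        for group, total in did_not_reoffend.items()
    }


# Invented sample data, for illustration only.
sample = [
    ("group_1", True, False),
    ("group_1", True, False),
    ("group_1", False, False),
    ("group_1", False, False),
    ("group_1", True, True),
    ("group_2", True, False),
    ("group_2", False, False),
    ("group_2", False, False),
    ("group_2", False, False),
    ("group_2", True, True),
]

print(false_positive_rates(sample))
```

In this made-up sample, people in the first group who never reoffended are wrongly flagged twice as often as those in the second. A gap like that is exactly the kind of signal a human reviewer would need to investigate before trusting the tool.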

3. Guarding AI from the forces of evil

As technology evolves with the primary aim of aiding humanity, there will always be some who seek to use it to wreak havoc on others.

These fights won’t take place on the battlefield but in cyberspace, making them even more damaging because they can reach any individual.

With this in mind, cybersecurity’s importance will become paramount in the battles to come; after all, we’re dealing with a system that is faster and more capable than us by orders of magnitude.

4. Keeping humanity at the wheel

Humans have always been nature’s apex predators; our dominance is not merely physical, but one of ingenuity and expanding intelligence.

We can get the better of bigger, faster, stronger animals because we can create and use tools to control them: both physical tools such as cages and weapons, and cognitive tools like training and conditioning.

However, since AI is miles ahead when it comes to handling different outcomes, it poses a serious question that must be answered sooner rather than later.

Will it, one day, have the same advantage over us?

If this scenario develops, humanity will need an alternative safeguard, since simply pulling the plug won’t be enough to halt an advanced machine that could anticipate the move and attempt to defend itself.

As we continue to evaluate the risks that accompany such life-changing tech, humanity must move beyond merely asking questions and begin to define and act upon the countermeasures that assure us of a cleaner approach to tech.