As government entities abuse the influence of social media platforms on a global scale, Facebook released a report on Wednesday highlighting its takedown of global disinformation networks that promote false information to manipulate public opinion.

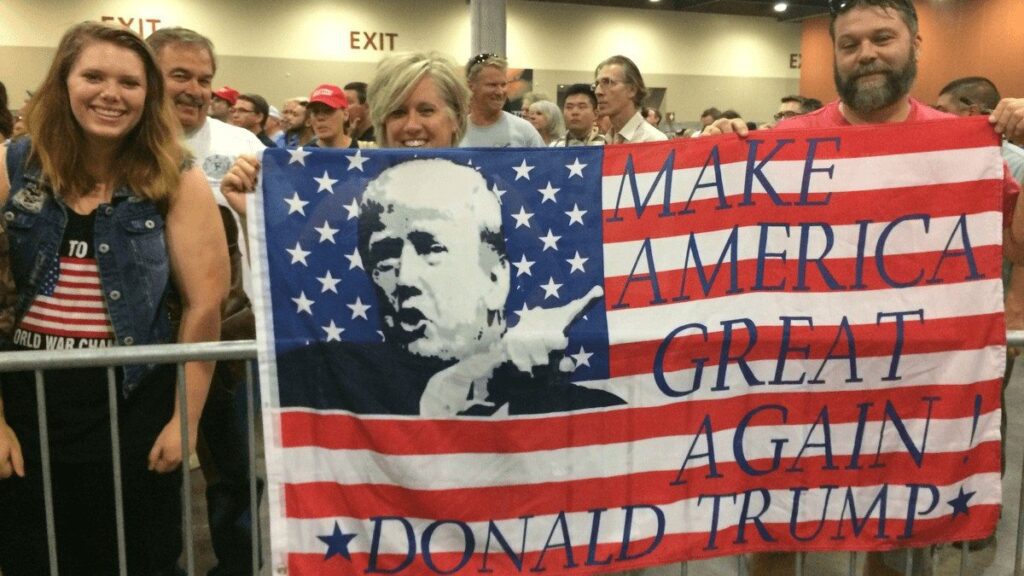

One thing the January 6 Capitol riot revealed is that social media platforms, especially Facebook, have a powerful influence on public opinion on topics such as COVID-19 vaccinations, elections, China, Europe, and much more.

These platforms have become a digital battlefield on which different parties seek to manipulate the public’s understanding of specific issues. For instance, according to the Big Tech giant’s own disclosure, Russia is one of the leading players that have weaponized the platform to exert social pressure.

Meta informed the world of its efforts to clean up its network by removing fake and confrontational accounts from its social networking platform. In its report, it described its latest approaches to taking down multiple networks for Coordinated Inauthentic Behavior (CIB) that fuels global disinformation.

“Today, we’re sharing our first report that brings together multiple network disruptions for distinct violations of our security policies: Coordinated Inauthentic Behavior and two new protocols – Brigading and Mass Reporting. We shared our findings with industry peers, independent researchers, law enforcement, and policymakers so we can collectively improve our defense. We welcome feedback from external experts as we refine our approaches and reporting,” Meta’s Head of Security Policy, Nathaniel Gleicher, said in the report.

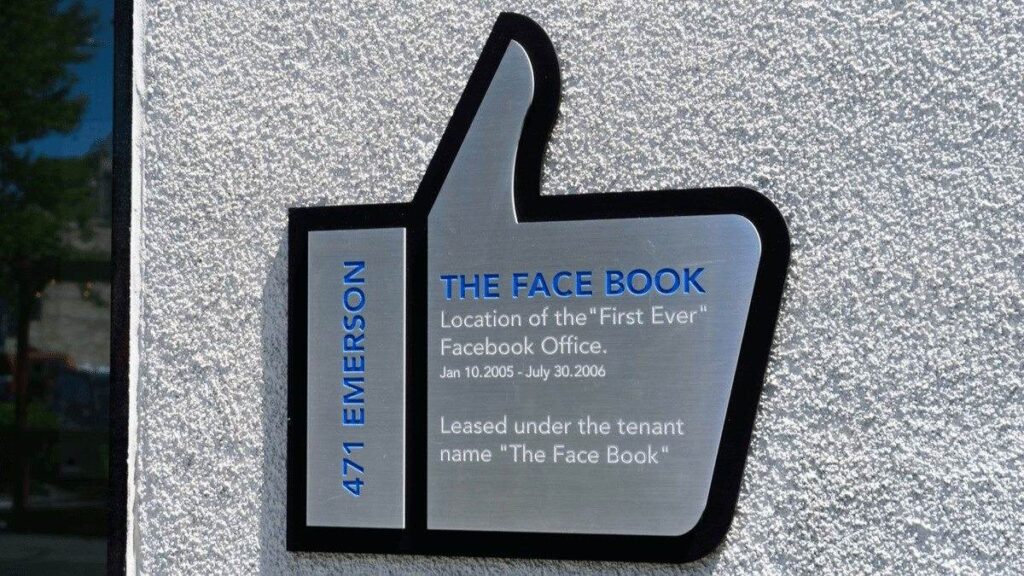

Meta first moved to overhaul its platform’s strategy for fighting misleading campaigns after Russia’s interference in the 2016 U.S. election. Following this, the tech giant initiated a program to remove hundreds of malicious political organizations, marketing firms, and government and profit-driven entities from its platform.

Fast forward to the present, and Meta’s approach to tackling how misinformation reshapes public perception has changed drastically, but it is not enough. While the company seeks to strengthen its strategies, the threat landscape has also evolved considerably in the past five years.

Journalistic tactics have changed along with it in the digital age. Governments now have the option of outsourcing their disinformation efforts to private, global disinformation-for-hire firms, entities that pay unwitting journalists to cover particular topics, using fake profiles generated by artificial intelligence (AI).

AI has played a critical role in shaping the next generation of global disinformation used to create social pressure, and Facebook has intentionally overlooked the influence its highly intelligent systems have on society.

The social networking titan does not disclose how widely disinformation campaigns spread, which prevents observers from gauging the subtle effects of these campaigns. Instead, it discloses only the accounts and followers removed from its platform, never the number of views the misleading posts received.

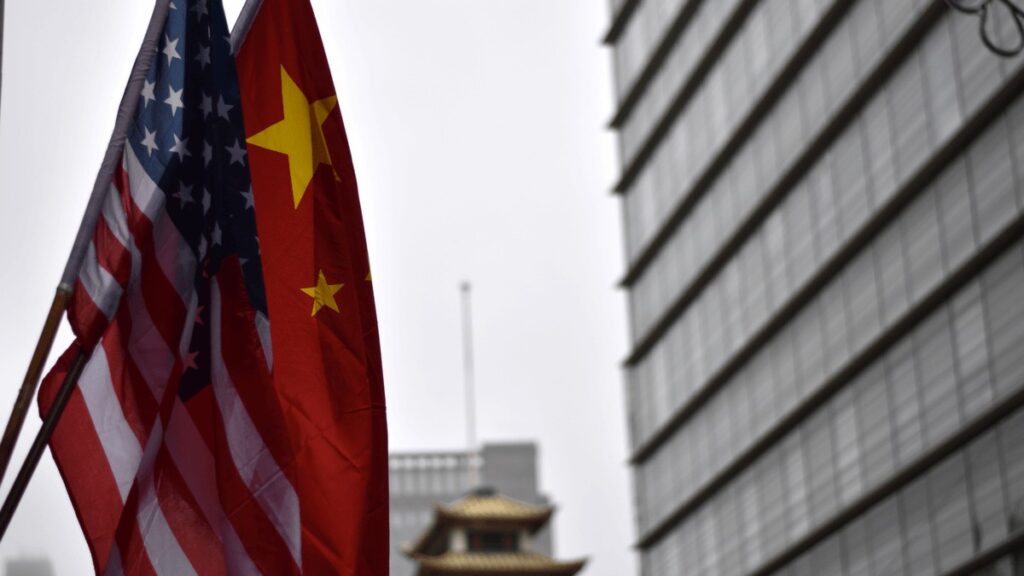

According to the report, Meta uncovered a China-based operation after a profile claiming to be a Swiss biologist posted that the U.S. was intimidating World Health Organization scientists. The account claimed the U.S. was using this approach to pin the blame for the coronavirus pandemic on China.

The profile was created only a day before its first post, and the Chinese state-controlled media organizations Global Times and People’s Daily promptly reported the false claim. Once the news reached Facebook, the company launched its own investigation and ultimately connected the account to a network of hundreds of fake accounts linked to individuals in China, most prominently a state-owned infrastructure firm.

Governments have since turned a scrutinizing eye on Meta and similar platforms, with Facebook whistleblower Frances Haugen breaking her silence on how the company intentionally disregards the damaging effect it has on shaping public opinion about particular companies, specifically those the giant considers vital to advancing its business interests.

The former product manager testified to Congress for the first time in early October, with her second hearing taking place on Wednesday. In her testimony to the House Energy and Commerce subcommittee, Haugen highlighted the need to take action against Facebook’s misconduct towards its users.

The second Congressional hearing focused on possible reforms for such social media platforms, specifically to Section 230 of the Communications Decency Act.

Enacted in 1996, the law shields online platforms from being held responsible for their users’ conduct.

“This committee’s attention and this Congress’ action are critical,” Haugen said in the opening statement of her second testimony, while emphasizing the importance of care in restructuring the law, as changes could have immense repercussions.

“As you consider reforms to Section 230, I encourage you to move forward with your eyes open to the consequences of the reform. Congress has instituted carve-outs to Section 230 in recent years. I encourage you to talk to human rights advocates who can help provide context on how the last reform of 230 had dramatic impacts on the safety of some of the most vulnerable people in our society but has been rarely used for its original purpose,” she added.

The Congressional hearing began with U.S. Representative Michael Doyle, a Pennsylvania Democrat, recognizing the fundamental societal challenges caused by these platforms while highlighting the importance of Section 230. He added that governmental interpretations of the act must undergo significant changes to be applied to the social networking companies that have emerged in the past decade.

However, throughout the hearing, the committee did not thoroughly discuss these amendments or any potential legislative approach to altering Section 230. While numerous members of Congress expressed the need for these modifications, there appeared to be little agreement on what steps should be taken to initiate bipartisan action.

One of the speakers called to testify was Common Sense Media CEO Jim Steyer, who expressed his frustration with the committee’s handling of efforts to minimize the spread of disinformation.

“I would like to say to this committee, you’ve talked about this for years, but you haven’t done anything. Show me a piece of legislation that you passed. 230 reform is going to be very important for protecting kids and teens on platforms like Instagram and holding them accountable and liable. But you also as a committee have to do privacy, antitrust and design reform,” Steyer said.

Social media companies, Meta and its platforms in particular, have played a decisive role in strengthening their own hold on societies. Past events have demonstrated the extent of their influence on society and the severe consequences that could arise if authorities neglect or fail to address their ever-growing power on a global level.